Category: EEG

-

Tattoo electrodes for long-term EEG, MEG measurements

Graz University professor Francesco Greco has built on his earlier work to create advanced inkjet-printed conductive polymer electrodes on tattoo paper. The composition and thickness of the transfer paper and conductive polymer have been optimized to achieve a better electrode/skin connection and improve EEG signal quality. The cheap, user-friendly, dry electrodes have shown similar…

-

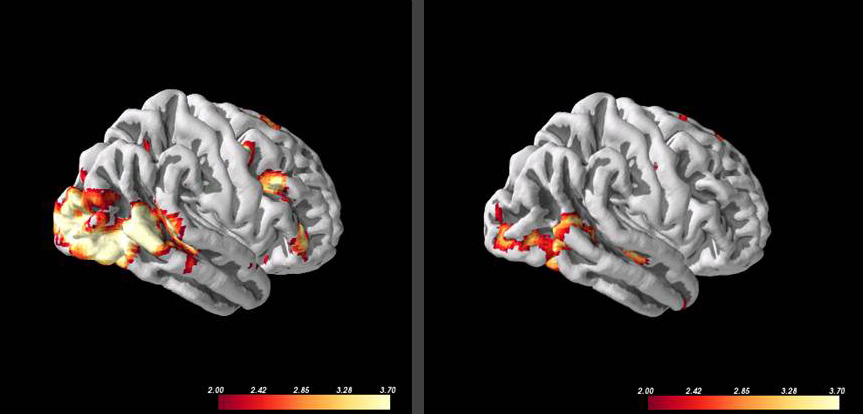

EEG identifies cognitive motor dissociation

Nicholas Schiff and Weill Cornell colleagues have developed an EEG-based method for measuring the delay in brain processing of continuous natural speech in patients with severe brain injury. Study results correlated with fMRI-obtained evidence, which is commonly used to identify the capacity to perform cognitively demanding tasks. EEG can be used for long periods, and is cheaper and…

-

Nathan Intrator on epilepsy, AI, and digital signal processing | ApplySci @ Stanford

Nathan Intrator discussed epilepsy, AI and digital signal processing at ApplySci’s Wearable Tech + Digital Health + Neurotech Silicon Valley conference on February 26-27, 2018 at Stanford University. Join ApplySci at the 9th Wearable Tech + Digital Health + Neurotech Boston conference on September 24, 2018 at the MIT Media Lab. Speakers include: Mary Lou…

-

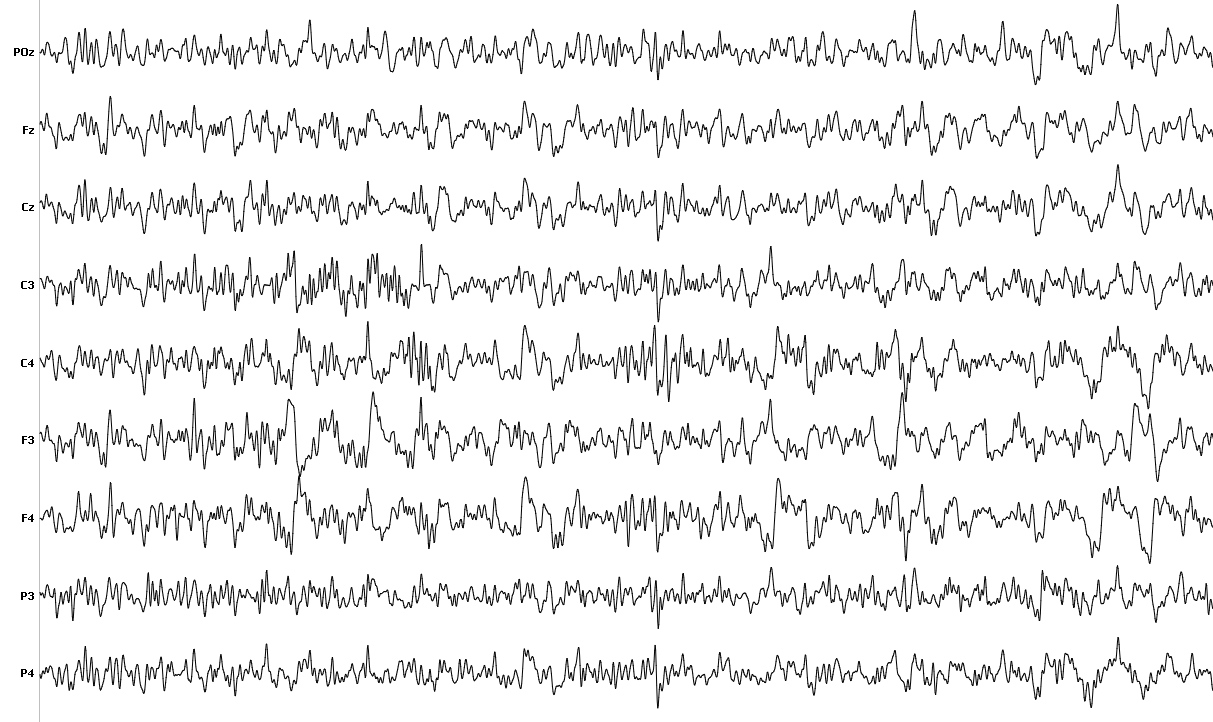

EEG + AI assists drivers in manual and autonomous cars

Nissan’s Brain-to-Vehicle (B2V) technology will enable vehicles to interpret signals from a driver’s brain. The company describes two aspects of the system, prediction and detection, both of which depend on a driver wearing EEG electrodes. Prediction: By detecting, via the brain, that the driver is about to move, including turning the steering wheel or pushing the…

-

EEG detects infant pain

Caroline Hartley and Oxford colleagues studied 72 infants during painful medical procedures. Using EEG, they found a signature change in brain activity about a half-second after a painful stimulus. They seek to understand its use in monitoring and managing infant pain, as well as the use of EEG in adult pain treatment. EEG is more precise than current heart rate,…

-

Mobile brain health management

After scanning the brains of healthy people and of patients with ALS, epilepsy, minimal consciousness, schizophrenia, and memory impairment, to monitor brain health and treatment effectiveness, Brown and Tel Aviv University professor Nathan Intrator has commercialized his algorithms and launched Neurosteer. At ApplySci’s recent NeuroTech NYC conference, Professor Intrator discussed dramatic advances in BCI, monitoring, and neurofeedback, in his…

-

EEG “password” uses stimulus response to confirm identity

Binghamton researchers have developed an EEG “brainprint” system that can identify people with 100 per cent accuracy, according to a recent study. A brain password is created when a user’s response to a stimulus is recorded via EEG. Identity is then confirmed by exposing the user to the same stimulus, recording their response, and using a pattern…

-

Brain reactions could replace passwords

Binghamton professors Sarah Laszlo and Zhanpeng Jin believe that they can verify a person’s identity by using EEG to monitor the way brains respond to words. Their Neurocomputing paper puts forth the view that thoughts can replace passwords. In April 2013, ApplySci described a similar study by Berkeley’s John Chuang. The researchers observed brain signals of 45 volunteers…

-

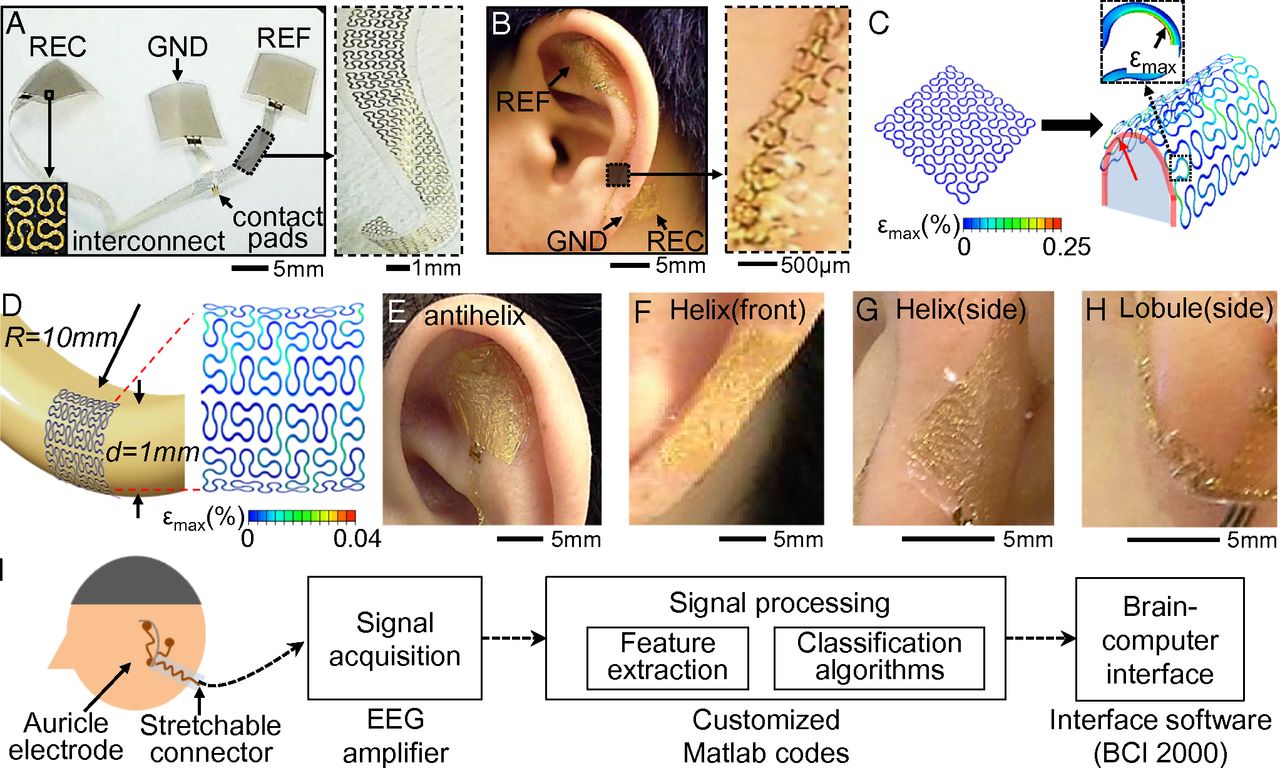

Discreet EEG sticker monitors brain activity

University of Illinois professor John Rogers has made another breakthrough in flexible medical electronics. His team has created an EEG system that sticks to the skin behind one’s ear to monitor brain activity. The miniature, lightweight, gold electrode device sticks to the skin without adhesive, and can be worn continuously for 2 weeks. While not yet…

-

Home-based autism therapies

The MICHELANGELO project creates home-based solutions for assessing and treating autism, including: pervasive, sensor-based technologies to perform physiological measurements such as heart rate, sweat index and body temperature; camera-based systems to monitor observable behaviors and record brain responses to natural environment stimuli; and algorithms allowing for the characterization of stimulus-specific brainwave anomalies. These technologies will allow for…

-

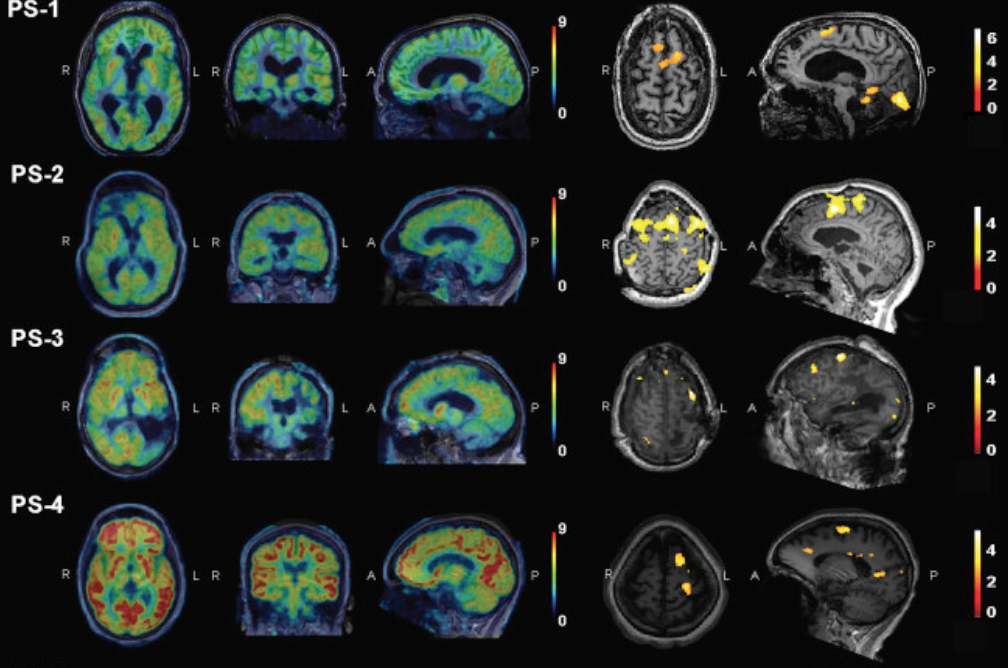

Brain scans for customized treatment

MIT’s John Gabrieli is investigating the use of neuroimaging to predict future behavior in order to customize brain health treatments. Professor Gabrieli believes that neuromarkers, determined by fMRI, can be used to develop personalized interventions that improve outcomes in education, health, addiction, and criminal behavior, and to analyze responses to drug or behavioral treatments. According to Gabrieli, “Presently, we often wait for failure,…

-

Stroke detecting headset prototype

Samsung’s Early Detection Sensor & Algorithm Package (EDSAP), developed by Se-hoon Lim, is meant to detect early signs of stroke. A multi-sensor headset records electrical impulses in the brain, algorithms take one minute to determine the likelihood of a stroke, and results are presented in a mobile app. EDSAP can also analyze stress and sleep patterns, and potentially…