Category: Signal Processing

-

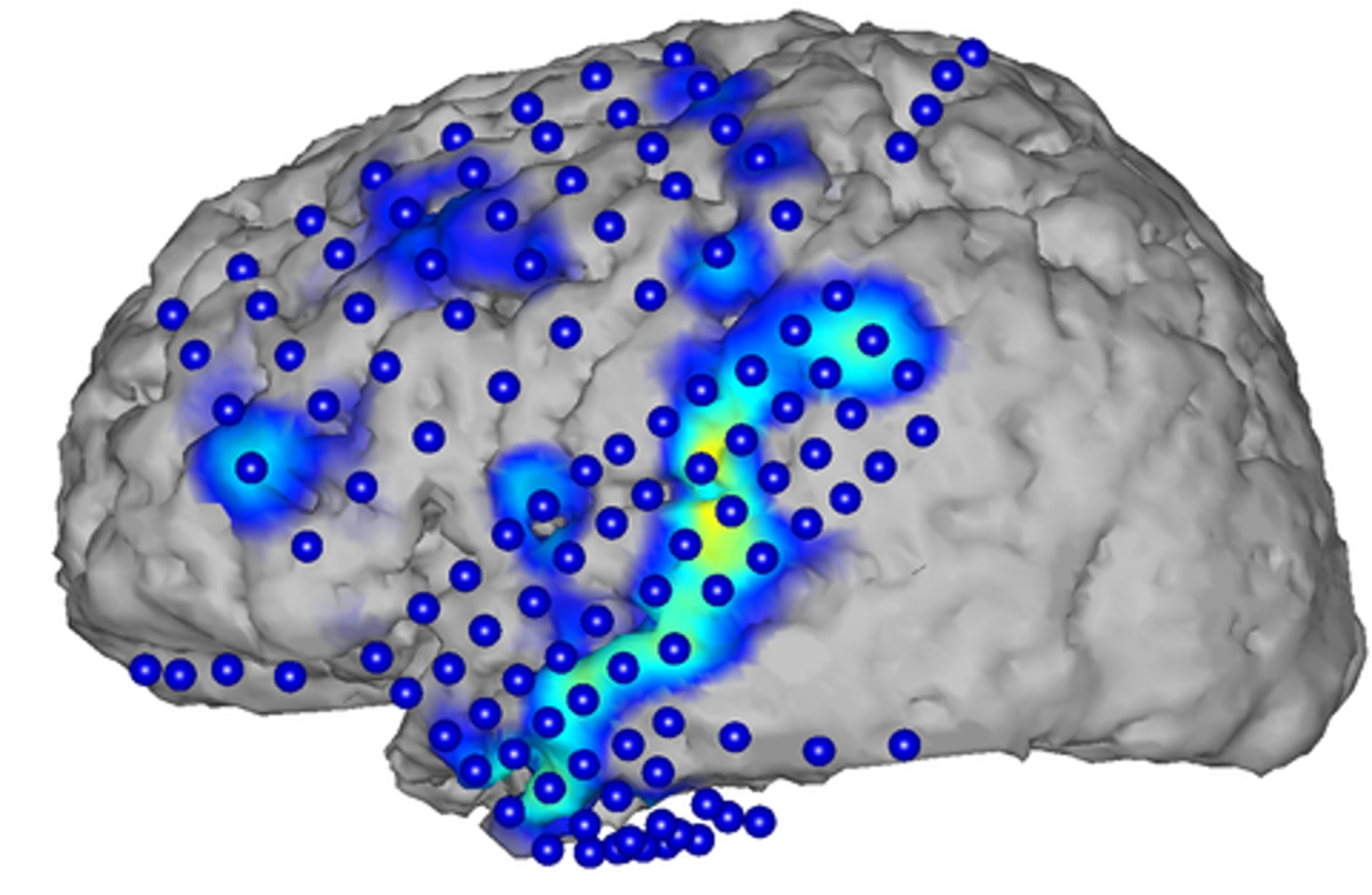

Spoken sentences recreated from brain activity patterns

KIT’s Tanja Schultz has reconstructed spoken sentences from brain activity patterns. Speech is produced in the cerebral cortex, and the associated brain waves can be recorded with surface electrodes. Schultz reconstructed basic units, words, and complete sentences from these brain waves and generated the corresponding text. This was achieved by combining advanced signal processing with automatic speech recognition.…

-

Smartphone sensor detects cancer in breath

Professor Hossam Haick at the Technion – Israel Institute of Technology has developed a sensor-equipped smartphone that screens a user’s breath for early cancer detection. SNIFFPHONE uses micro- and nanosensors that read exhaled breath. The information is transferred through the phone to a signal-processing system for analysis. According to Haick, the NaNose system can detect benign…

-

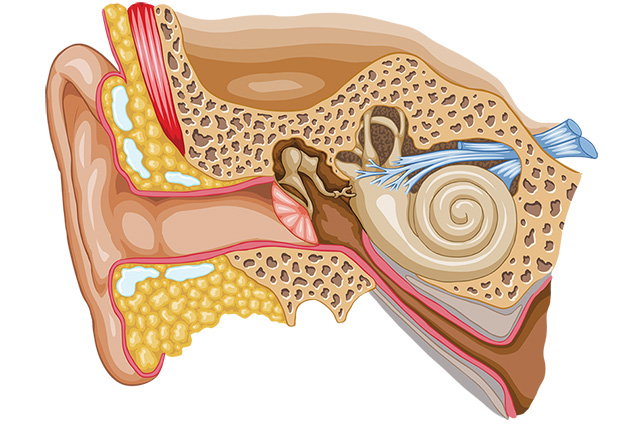

Wirelessly charged cochlear implant with no external hardware

http://web.mit.edu/newsoffice/2014/cochlear-implants-with-no-exterior-hardware-0209.html MIT scientists have developed a low-power signal-processing chip that could lead to a cochlear implant requiring no external hardware. Doctors from Harvard Medical School and the Massachusetts Eye and Ear Infirmary collaborated with the researchers. The implant would be recharged wirelessly and would run for eight hours. Instead of an external microphone, the implant would…

-

Brain Computer Interface – a timeline

http://www.livescience.com/37944-how-the-human-computer-interface-works-infographics.html From Babbage’s Difference Engine of 1822 through to thought control – a brief history of the intersection of mind and machine.

-

Medical monitoring via webcam

http://www.economist.com/blogs/babbage/2013/03/remote-monitoring A team of researchers at Xerox is working on technology that would allow doctors to obtain patients’ vital signs using a simple webcam. The team is already testing the technology to monitor the pulse rate of premature babies and to track irregular heartbeats in patients with arrhythmia. By applying further signal-processing…