Category: EEG

-

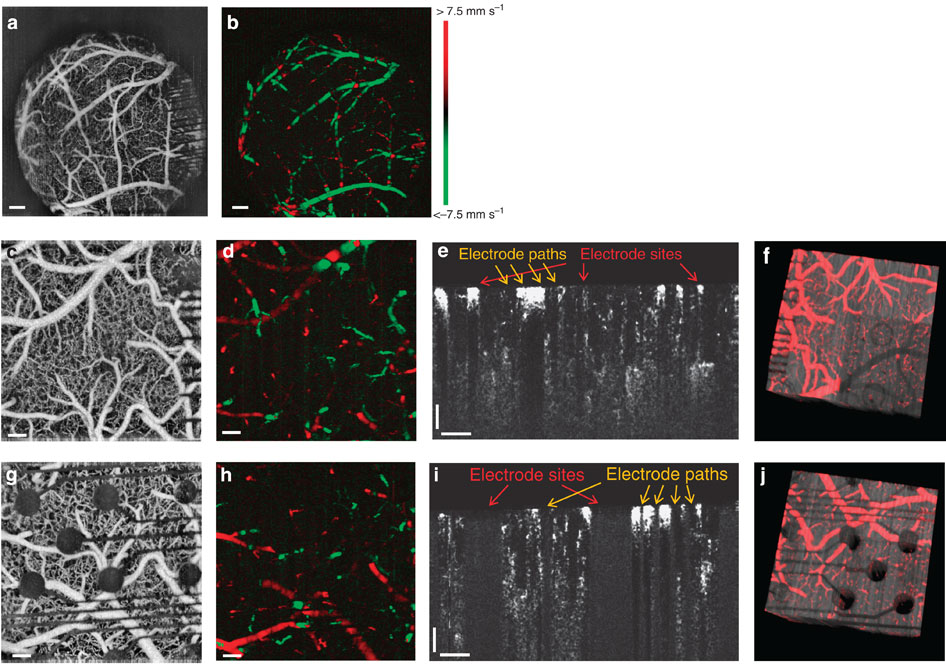

Transparent, implantable sensor improves brain monitoring

On October 23rd, ApplySci described Justin Williams’s graphene-based, transparent sensor brain implant. The Nature paper is now available online. This could redefine neural implants, as it enables better fMRI monitoring of the activity around the implant while capturing detailed activity from the area itself. Together with noninvasive EEG, this can help fine-tune very detailed EEG…

-

EEG could lead to earlier autism diagnosis

Albert Einstein College of Medicine professor Sophie Molholm has published a paper describing the way that autistic children process sensory information, as determined by EEG. She believes that this could lead to earlier diagnosis (before symptoms of social and developmental delays emerge), hence earlier treatment, which might reduce the condition’s symptoms. EEG readings were taken from 40…

-

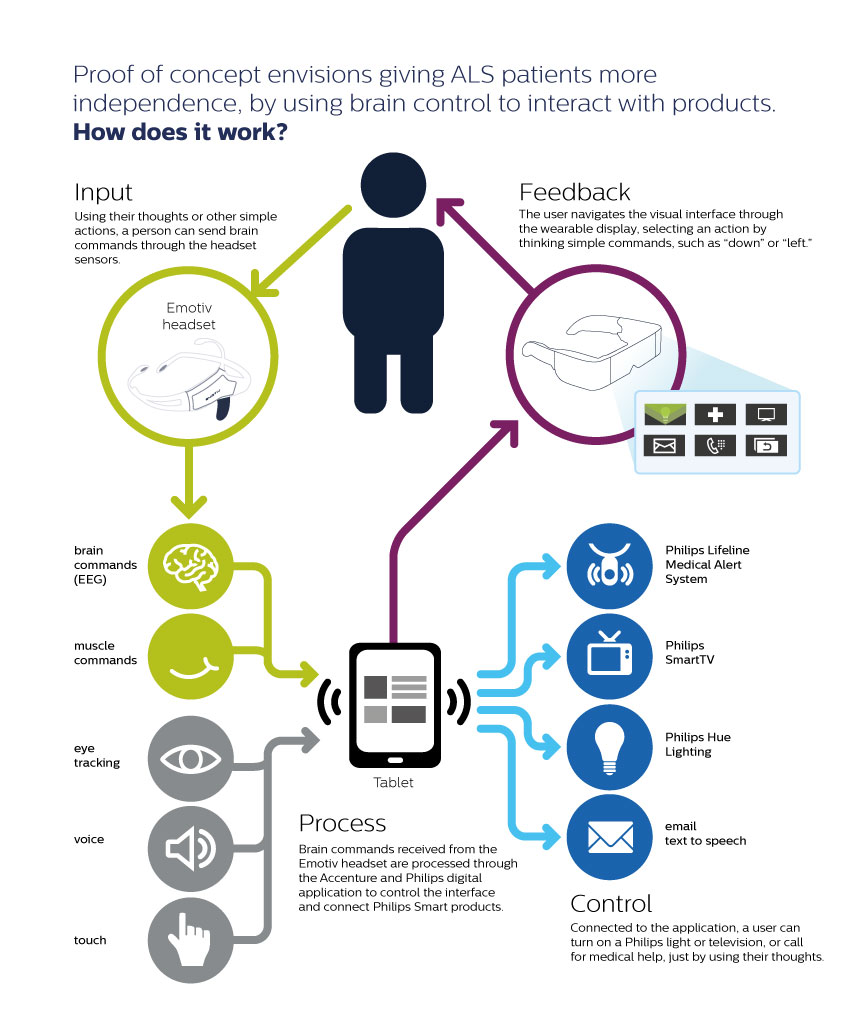

EEG enables ALS patients to control devices, communicate

Philips and Accenture are using EEG brainwaves to help ALS patients command electronic devices via a wearable display, a tablet and software. The system can access a medical alert service, a smart TV and wireless lighting, and communicate via pre-configured messages. The wearable display provides visual feedback that allows the user to navigate the application…

-

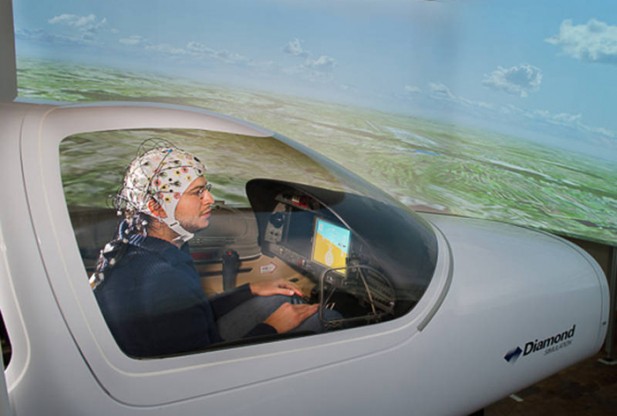

Brainflight project for BCI-enabled flying

Professor Florian Holzapfel and colleagues at the Institute of Flight System Dynamics of the Technische Universität München have demonstrated the feasibility of flying via brain control. Brainwaves of the pilots are measured with EEG electrodes connected to a cap. An algorithm developed by Team PhyPa at the Berlin Institute of Technology deciphers electrical potentials and converts them into…

-

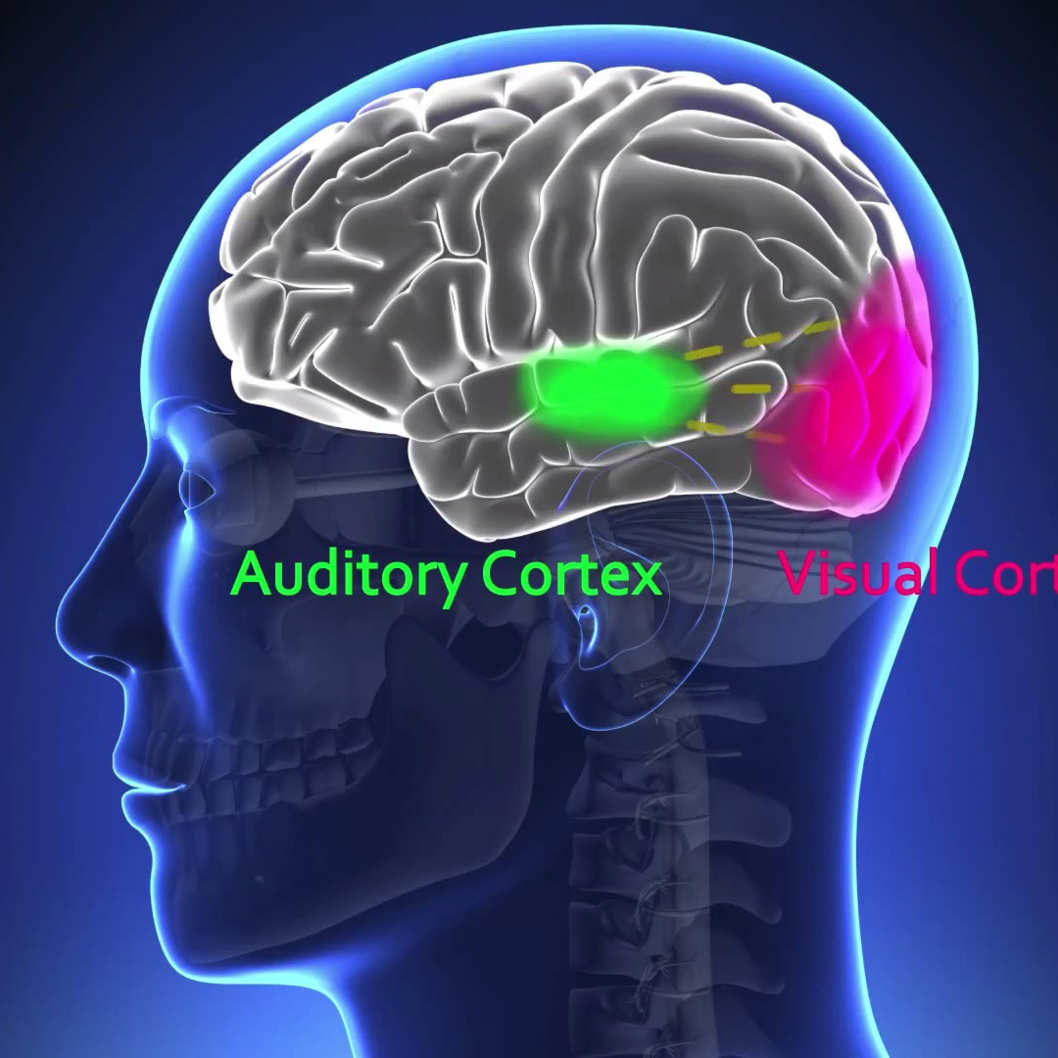

Ultrasound stimulation enhanced sensory performance in human brain

http://www.nature.com/neuro/journal/vaop/ncurrent/full/nn.3620.html

Virginia Tech Carilion Research Institute scientists, led by Professor William Tyler, have demonstrated that ultrasound directed to a specific region of the brain can boost performance in sensory discrimination. This is the first example of low-intensity, transcranial focused ultrasound modulating human brain activity to enhance perception. The scientists delivered focused ultrasound to an area of the cerebral…

-

Biofeedback tool identifies seizure brain patterns through music

http://news.stanford.edu/news/2013/september/seizure-music-research-092413.html

In a recent experiment, Stanford professors Chris Chafe and Josef Parvizi created audio EEG recordings of both normal brain activity and seizure states. During the state of seizure, tones became more pronounced and their tempo became chaotic. “We could instantly differentiate seizure activity from non-seizure states with just our ears,” Chafe said. “It was…

-

Non-invasive EEG ear device to detect seizures

http://www.technologyreview.com/news/518356/device-could-spot-seizures-by-reading-brainwaves-through-the-ear/

Danilo Mandic at Imperial College London has developed an EEG device that can be worn inside the ear, like a hearing aid. It will enable scientists to record EEGs for several days at a time, allowing doctors to monitor patients who have recurring problems such as seizures or microsleep. By nestling the EEG inside…

-

FDA approves EEG-based device to diagnose ADHD

http://www.fda.gov/NewsEvents/Newsroom/PressAnnouncements/ucm360811.htm

US regulators have approved a device that analyzes brain activity to help confirm a diagnosis of attention deficit hyperactivity disorder in children ages 6 to 17. It records the different kinds of electrical impulses given off by neurons in the brain and how many times per second those impulses are given off. The Neuropsychiatric EEG-Based Assessment Aid test is…

-

Thought-controlled flying robot

http://www.livescience.com/27849-mind-controlled-devices-brain-awareness-nsf.html

University of Minnesota researchers led by Professor Bin He have been able to control a small helicopter using only their minds, pushing the potential of technology that could help paralyzed or motion-impaired people interact with the world around them. An EEG cap with 64 electrodes was placed on the head of the person controlling the helicopter.…

-

Skin-mounted electrode arrays measure neural signals

http://coleman.ucsd.edu/lab-research/

Professor Todd Coleman of UCSD is developing foldable, stretchable electrode arrays that can non-invasively measure neural signals. They can also provide more in-depth analysis by including thermal sensors to monitor skin temperature and light detectors to analyze blood oxygen levels. The device is powered by micro solar panels and uses antennae to wirelessly transmit or…

-

EPSRC funds 15 creative healthcare engineering projects

http://www.epsrc.ac.uk/newsevents/news/2013/Pages/enghealthcare.aspx

The EPSRC is funding technologies in three health areas:

1. Medical Imaging. Projects include technology which could:
   - lead to better diagnosis and treatment for epilepsy, multiple sclerosis, depression and dementia, as well as breast cancers and osteoporosis
   - reduce risks during brain surgery by creating ultrasound devices in needles
   - improve therapies for brain-injured patients and…