Category: BCI

-

Brain-spine interface allows paraplegic man to walk

EPFL professor Grégoire Courtine has created a “digital bridge” that has allowed a man whose spinal cord damage left him with paraplegia to walk. The brain–spine interface builds on previous work, which combined intensive training with a lower-spine stimulation implant. Gert-Jan Oskam participated in this trial, but stopped improving after three years. The new…

-

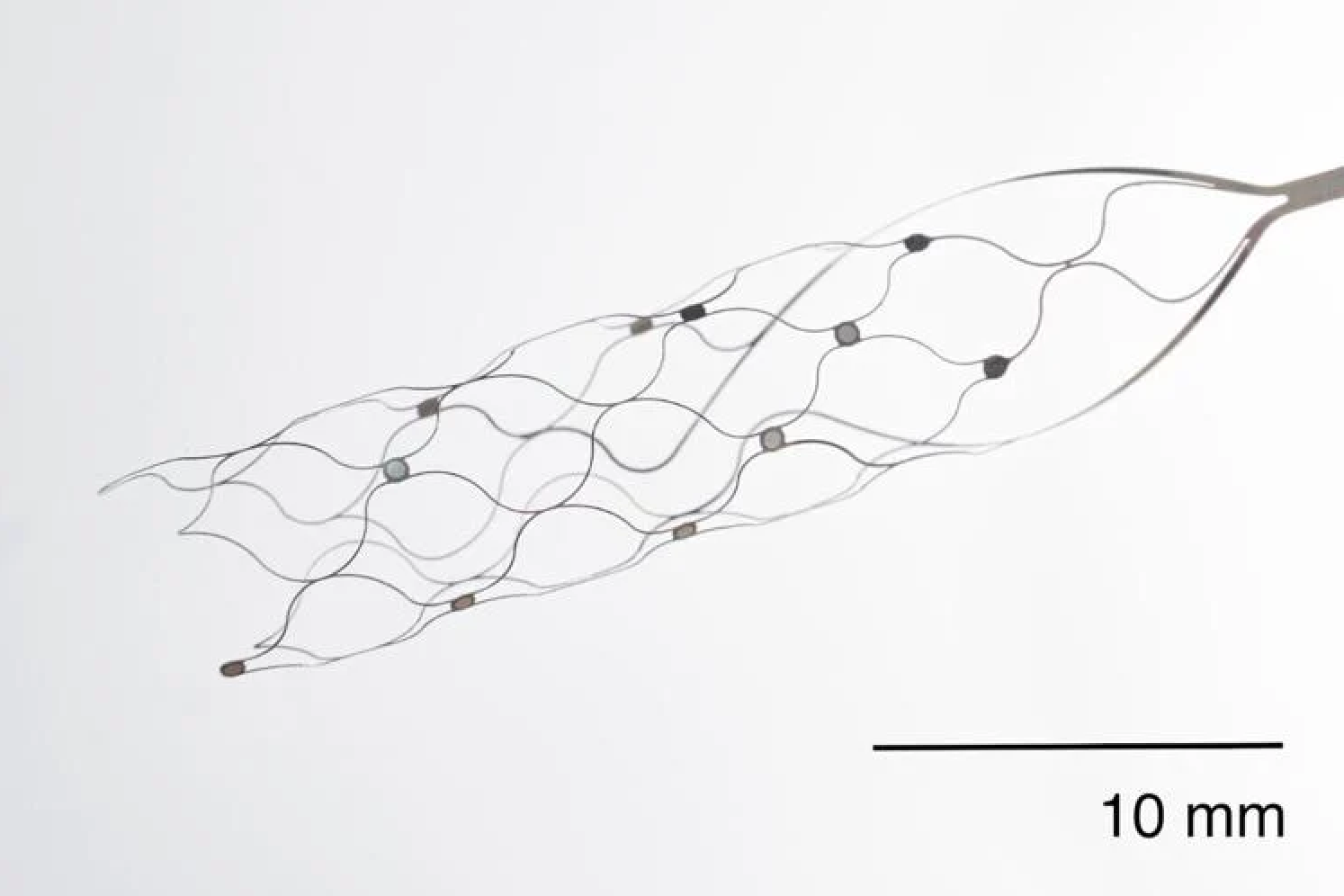

First US patient receives Synchron endovascular BCI implant

On July 6, 2022, Mount Sinai’s Shahram Majidi threaded Synchron’s 1.5-inch-long wire-and-electrode implant into a blood vessel in the brain of a patient with ALS. The goal is for the patient, who cannot speak or move, to be able to surf the web and communicate via email and text using his thoughts. Four…

-

Shallow implant plus precise stimulation startup aims to treat depression

Inner Cosmos is a startup whose new, shallowly implanted brain stimulation system is meant to address depression. The company calls its system a “digital pill” but the system still requires a procedure to place electronics under the skin on the head. Chief Medical Officer Eric Leuthardt is a top neurosurgeon from Washington University in St. Louis and the CEO…

-

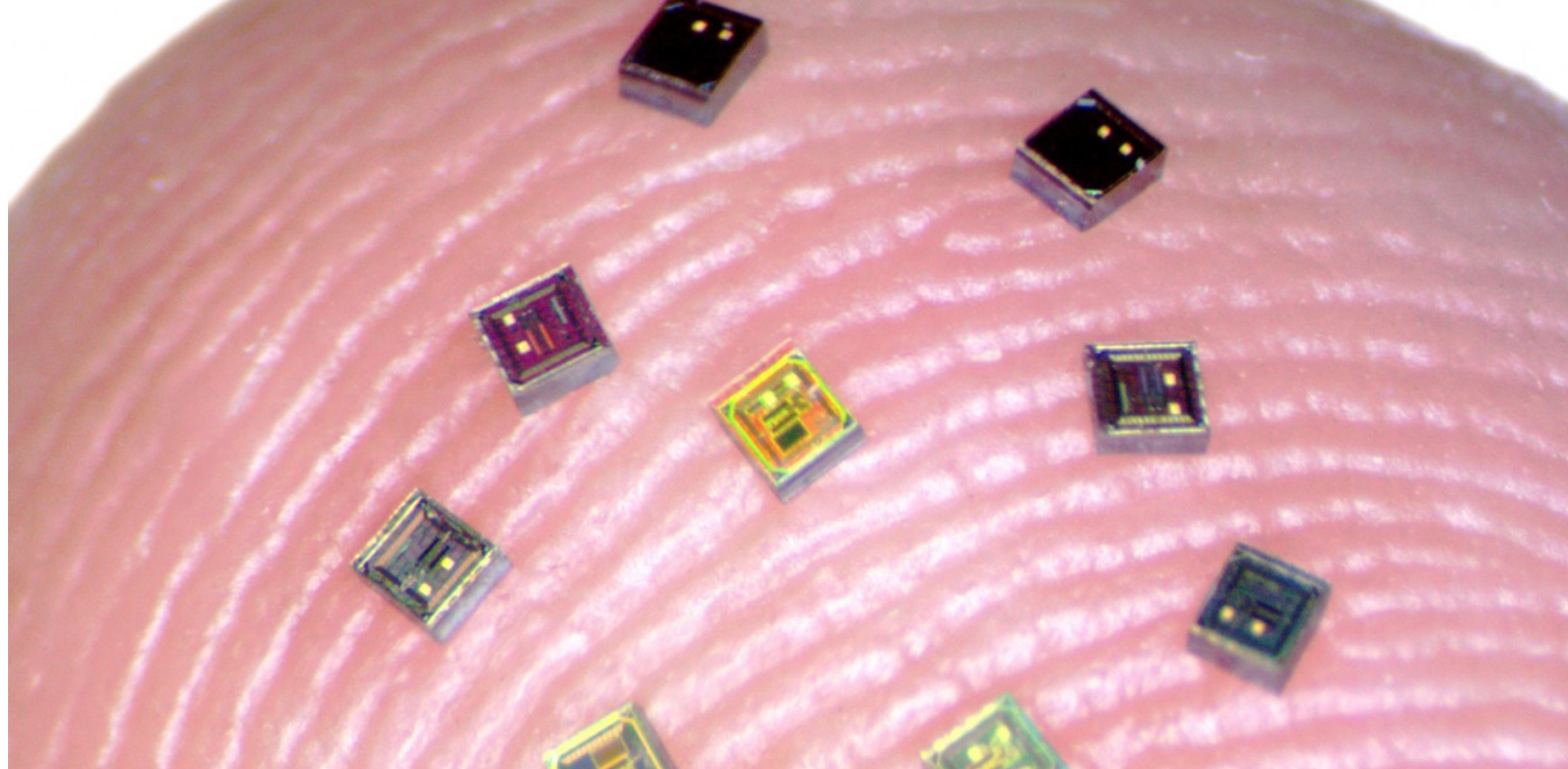

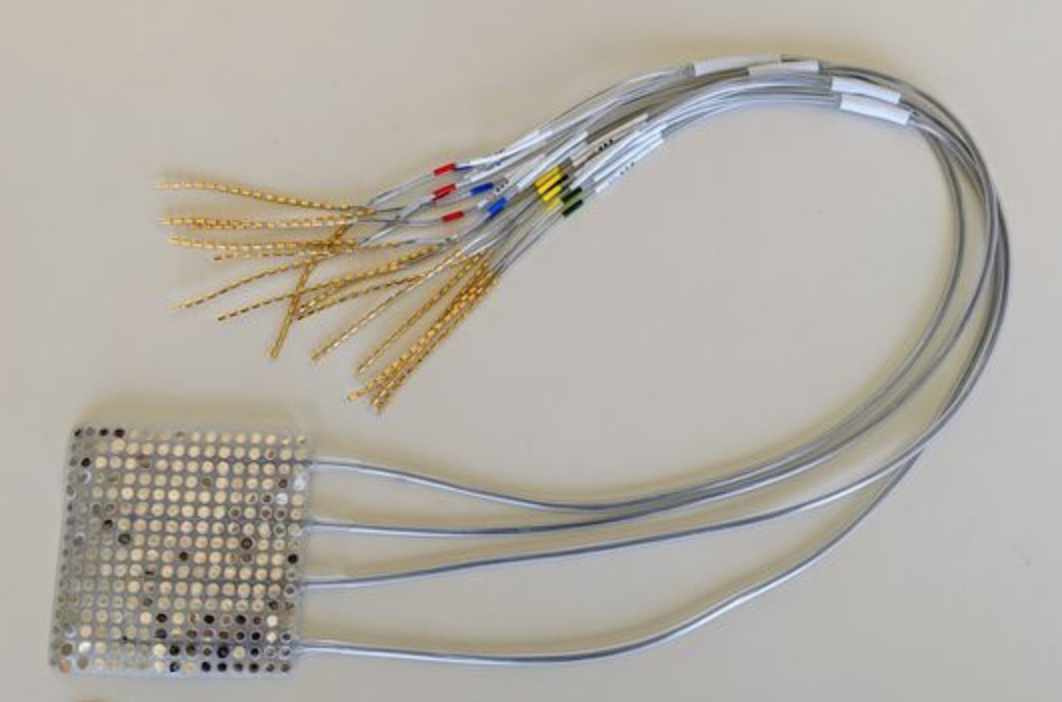

Nurmikko’s Neurograins can enable unprecedented brain signal recording detail, new therapies

Arto Nurmikko and Brown colleagues have developed a BCI system that employs a coordinated network of independent, wireless microscale neural sensors to record and stimulate brain activity. “Neurograins” independently record electrical pulses made by firing neurons and send the signals wirelessly to a central hub, which coordinates and processes the signals. In a recent Nature paper,…

-

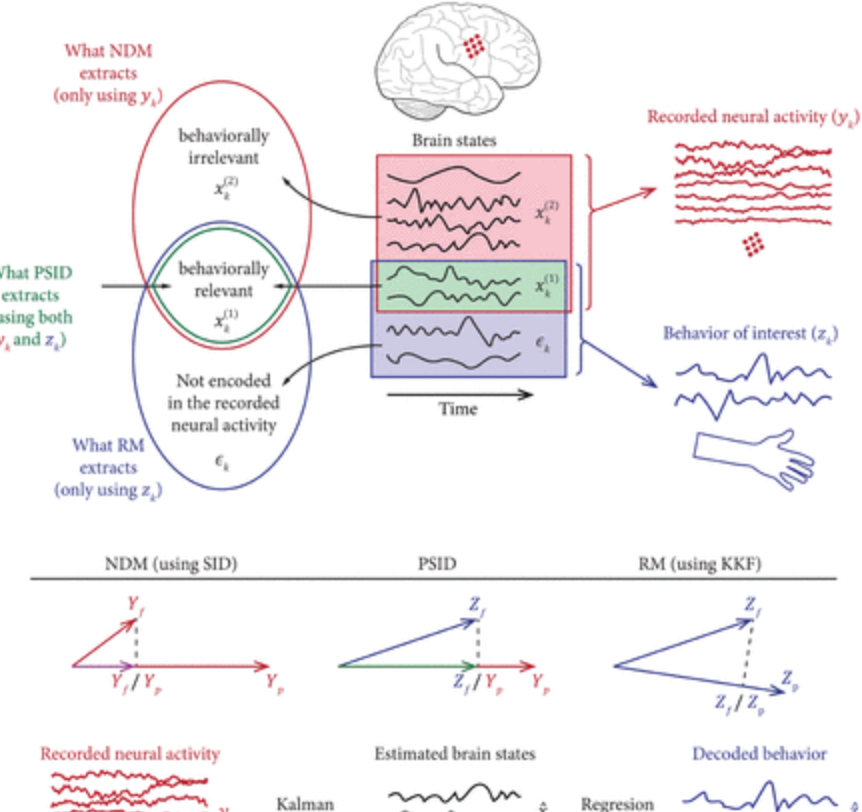

Algorithm isolates specific brain signals, provides feedback

The US Army and USC Prof. Maryam Shanechi have developed an algorithm that can determine which specific brain signals correspond to specific behaviors, like walking and breathing. Segmenting brain signals has been notoriously difficult, as the signals associated with different tasks mix together. Shanechi and her team used the algorithm to separate behaviorally relevant brain signals from behaviorally…

-

Polymer improves medical implants, could enable brain-computer interface

David Martin and University of Delaware colleagues have developed a bio-synthetic coating for electronic components that could avoid the scarring (and signal disruption) caused by traditional microelectric materials. The PEDOT polymer improved the performance of medical implants by reducing their opposition to an electric current. PEDOT film was used with an antibody to stimulate blood…

-

Facebook’s Mark Chevillet on Brain-Computer Interfaces

Mark Chevillet’s recent talk at the ApplySci Silicon Valley conference, called “Imagining a new Interface: Hands-free Communication Without Saying a Word” is now live on the ApplySci YouTube Channel. Join ApplySci at Deep Tech Health + Neurotech Boston on September 24, 2020 at MIT.

-

Implanted electrodes + algorithm allow thought-driven four-limb exoskeleton control

Alim Louis Benabid and Clinatec/University of Grenoble colleagues have developed an exoskeleton, controlled by a brain-computer interface, that enabled a tetraplegic man to walk and move his arms. Two 64-electrode brain implants drove the system. Benabid explained the benefits, stating that “previous brain-computer studies have used more invasive recording devices implanted beneath the outermost membrane of the…

-

CTRL-Labs acquired by Facebook for $500M–$1B

Congratulations to CTRL-Labs and Lux Capital on Facebook’s acquisition of the four-year-old neurotech startup. The company, whose technology assists in decoding brain activity and intention, will join Facebook’s AR/VR team. CTRL-Labs participated in a recent ApplySci panel of startups at Stanford led by Lux Capital’s Shahin Farshchi. Facebook presented its brain-computer interface work at the…

-

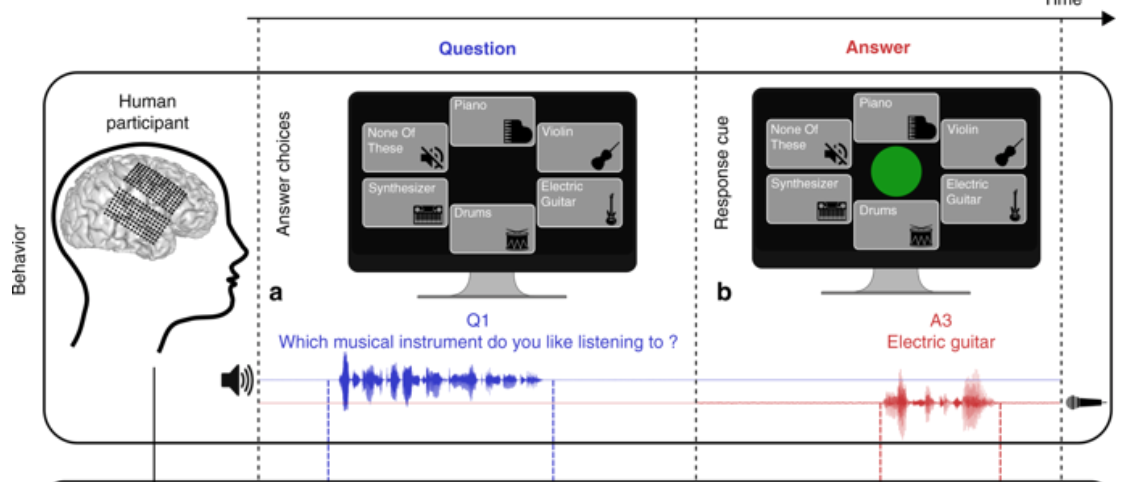

BCI reads whole words from thoughts; no virtual keyboard necessary

Edward Chang at UCSF, Mark Chevillet at Facebook, and colleagues have published a study in which implanted electrodes were used to “read” whole words from thoughts. Previous technology required users to spell words with a virtual keyboard. Subjects listened to multiple-choice questions and spoke answers aloud. An electrode array recorded activity in parts of the brain…

-

Study: Noninvasive BCI improves function in paraplegia

Miguel Nicolelis has developed a non-invasive system for lower-limb neurorehabilitation. Study subjects wore an EEG headset to record brain activity and detect movement intention. Eight electrodes were attached to each leg, stimulating muscles involved in walking. After training, patients used their own brain activity to send electric impulses to their leg muscles, imposing a physiological gait. With…

-

Thought-generated speech

Edward Chang and UCSF colleagues are developing technology that will translate signals from the brain into synthetic speech. The research team believes that the sounds would be nearly as sharp and normal as a real person’s voice. Sounds made by the human lips, jaw, tongue and larynx would be simulated. The goal is a communication method for…