Category: Robotics

-

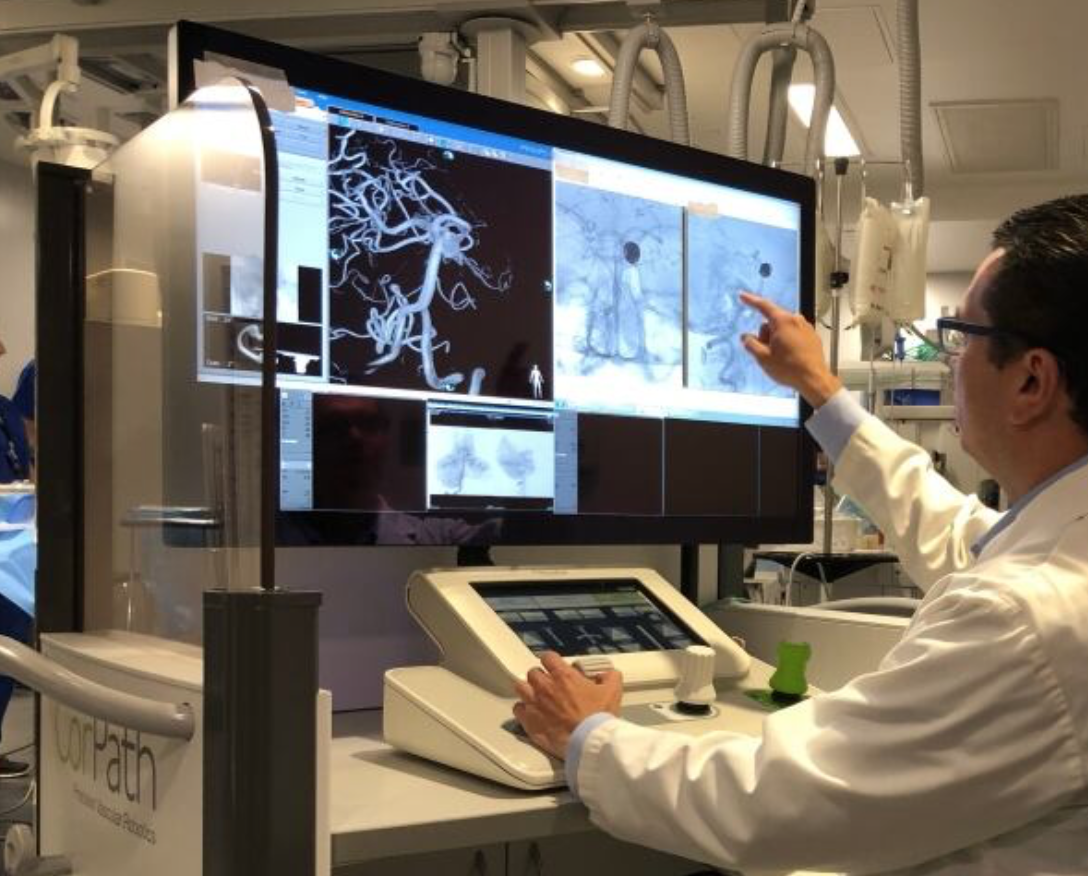

Remote, robotic surgery for aneurysm, stroke

Vitor Mendes Pereira at Toronto Western Hospital and Krembil Brain Institute used a Siemens Healthineers-developed robot arm to help remove an aneurysm. In the interventional procedure, a catheter was guided to the patient's brain from an incision made near the groin. The CorPath GRX robotics platform is controlled by joysticks and a touchscreen. A bedside technician…

-

Robot helps autistic kids engage

Georgia Tech professor Ayanna Howard is using interactive robots to help autistic kids engage with others, socially and emotionally. Her company, Zyrobotics, is commercializing this technology. In a study, 18 kids between the ages of 4 and 12, five of whom had autism, interacted with two robots that expressed 20 emotional states, including boredom, excitement, and…

-

Sensor glove identifies objects

MIT’s Subramanian Sundaram has developed a sensor glove that identifies objects through touch. This could improve assistive robot performance and enhance prosthetic design. The cheap “scalable tactile glove” includes 550 tiny, pressure-capturing sensors. A neural network uses the data to classify objects and predict their weights. No visual input is required. In a Nature paper, the system…

-

Thought, gesture-controlled robots

MIT CSAIL’s Daniela Rus has developed an EEG/EMG robot control system based on brain signals and finger gestures. Building on the team’s previous brain-controlled robot work, the new system detects in real time whether a person notices a robot’s error. Muscle activity measurement enables the use of hand gestures to select the correct option. According to…

-

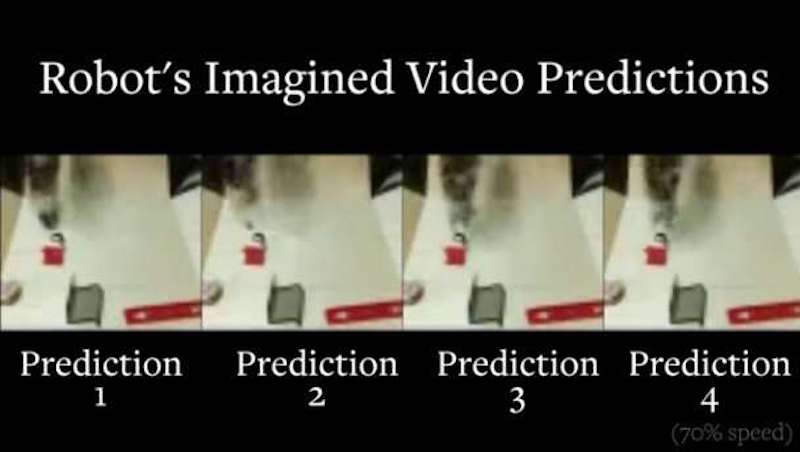

Robots visualize actions, plan, without human instruction

Sergey Levine and UC Berkeley colleagues have developed robotic learning technology that enables robots to visualize how different behaviors will affect the world around them, without human instruction. This ability to plan in various scenarios could improve self-driving cars and robotic home assistants. Visual foresight allows robots to predict what their cameras will see if they perform…

-

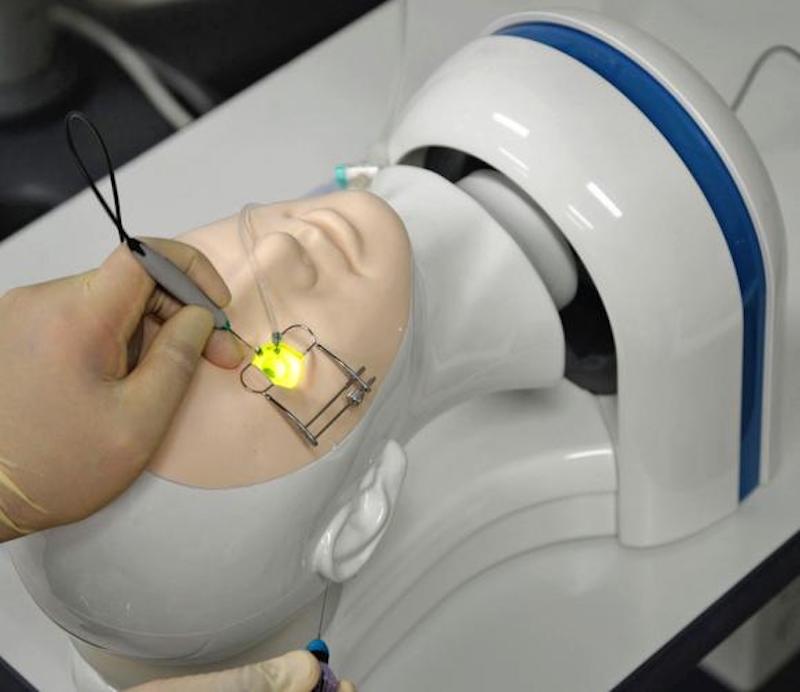

Robot “patients” for medical research, training

In addition to robots with increasingly human-like faces being used as companions, “patient” robots are being developed to test medical equipment and procedures on babies and adults. Yoshio Matsumoto and AIST colleagues created a robotic skeletal structure of the lower half of the body, with 22 movable joints. Its skeleton is made of metal, and its skin,…

-

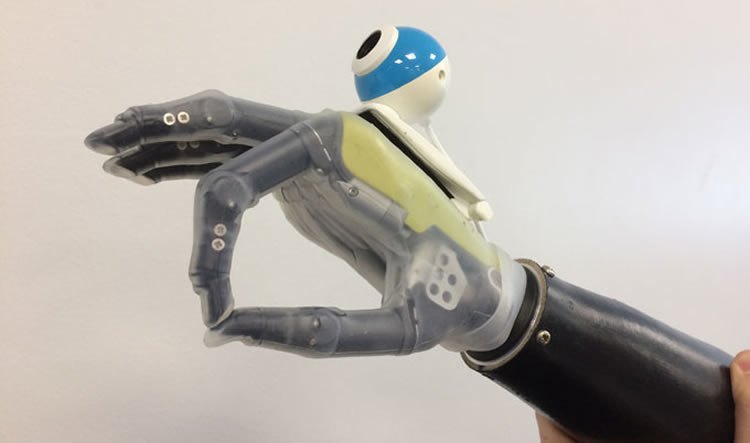

Deep learning driven prosthetic hand + camera recognize, automate required grips

Ghazal Ghazaei and Newcastle University colleagues have developed a deep learning-driven prosthetic hand + camera system that allows wearers to reach for objects automatically. Current prosthetic hands are controlled via a user’s myoelectric signals, requiring learning, practice, concentration and time. A convolutional neural network was trained with images of 500 graspable objects, and taught to recognize…

-

Solar powered, highly sensitive, graphene “skin” for robots, prosthetics

Professor Ravinder Dahiya, at the University of Glasgow, has created a robotic hand with solar-powered graphene “skin” that he claims is more sensitive than a human hand. The flexible, tactile, energy-autonomous “skin” could be used in health monitoring wearables and in prosthetics, reducing the need for external chargers. (Dahiya is now developing a low-cost…

-

Robots support neural and physical rehab in stroke, cerebral palsy

Georgia Tech’s Ayanna Howard has developed Darwin, a socially interactive robot that encourages children to play an active role in physical therapy. Specifically targeting children with cerebral palsy (who are involved in current studies), autism, or traumatic brain injury (TBI), the robot is designed to function in the home, to supplement services provided by clinicians. It engages users…

-

Sensors + robotics + AI for safer aging in place

IBM and Rice University are developing MERA, a Watson-enabled robot meant to help seniors age in place. The system comprises a Pepper robot interface, Watson, and Rice’s CameraVitals project, which calculates vital signs by recording video of a person’s face. Vitals are measured multiple times each day. Caregivers are informed if the camera and/or accelerometer detect…

-

Robotic hand exoskeleton for stroke patients

ETH professor Roger Gassert has developed a robotic exoskeleton that allows stroke patients to perform daily activities by supporting motor and somatosensory functions. His vision is that “instead of performing exercises in an abstract situation at the clinic, patients will be able to integrate them into their daily life at home, supported by a robot.”…

-

AI robot learns ward procedures, advises nurses

Julie Shah and MIT CSAIL colleagues have developed a robot to assist labor nurses. The AI-driven assistant learns how the unit works from people, and is then able to make care recommendations, including scheduling and patient movement. Labor nurses attempt to predict the arrival and length of labor, and which patients will require a…