Category: Prosthetics

-

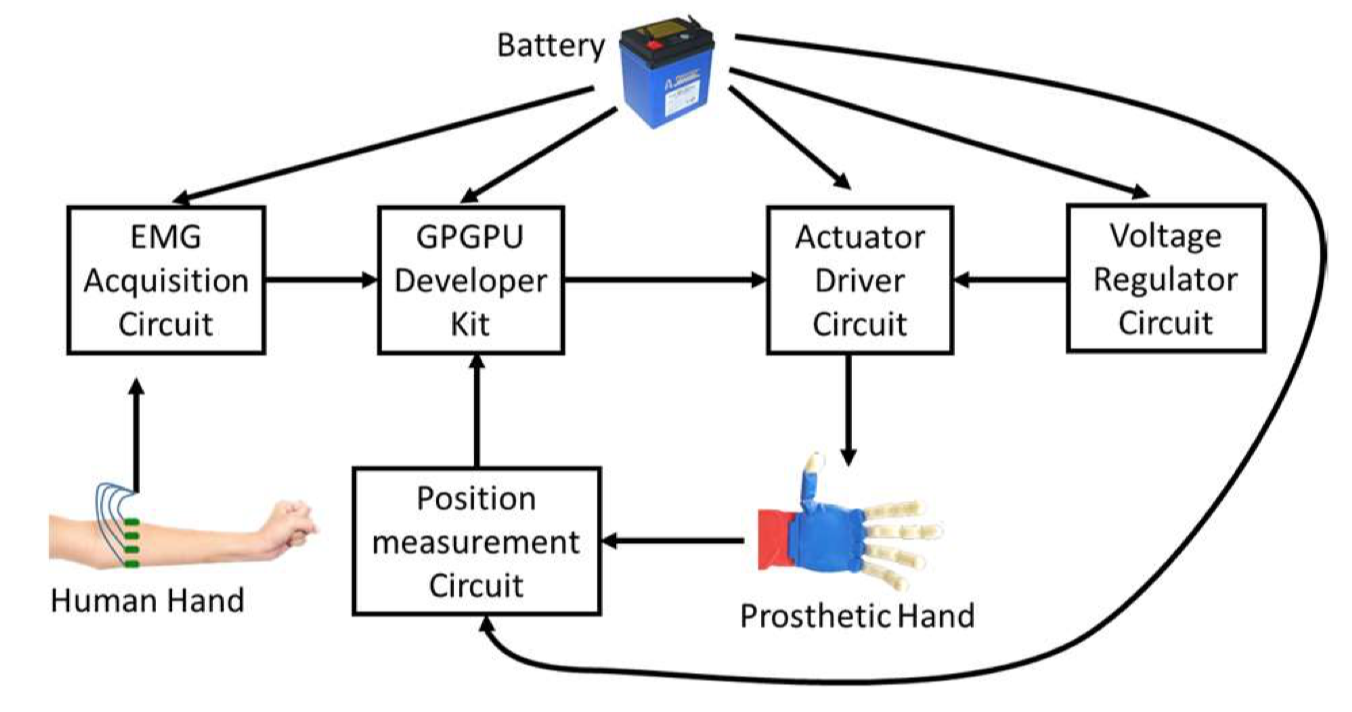

AI/EMG system improves prosthetic hand control

UT Dallas researchers Mohsen Jafarzadeh, Yonas Tadesse, and colleagues are using AI to control prosthetic hands with raw EMG signals. Their real-time convolutional neural network requires no signal preprocessing, which yields faster, more accurate classification and faster hand movements. The system can also be re-trained on each user’s own data to personalize its actions. Join ApplySci at the 12th Wearable Tech…
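For readers unfamiliar with classifying raw EMG directly, here is a minimal sketch of a 1D convolutional forward pass over a short multi-channel window of raw samples. It is not the UT Dallas architecture, which the teaser does not describe. The layer sizes, the function names `conv1d` and `classify_window`, the random weights and the three hypothetical output classes are illustrative assumptions. Training, including re-training on a user’s own data, is omitted.

```python
import math
import random

def conv1d(signal, kernels, bias):
    """Valid 1D convolution (cross-correlation, as in most deep learning
    libraries) over a multi-channel raw EMG window, followed by ReLU.

    signal:  list of channels, each a list of raw samples of equal length
    kernels: list of filters; each filter holds one weight list per channel
    bias:    one bias value per filter
    """
    n_samples = len(signal[0])
    width = len(kernels[0][0])
    maps = []
    for f, filt in enumerate(kernels):
        fmap = []
        for t in range(n_samples - width + 1):
            acc = bias[f]
            for ch, w in enumerate(filt):
                for k in range(width):
                    acc += w[k] * signal[ch][t + k]
            fmap.append(max(0.0, acc))  # ReLU activation
        maps.append(fmap)
    return maps

def classify_window(signal, kernels, bias, dense_w, dense_b):
    """Conv -> ReLU -> global average pooling -> linear layer -> softmax.
    Returns the index of the most likely class and the class probabilities."""
    maps = conv1d(signal, kernels, bias)
    pooled = [sum(m) / len(m) for m in maps]
    logits = [b + sum(w * p for w, p in zip(row, pooled))
              for row, b in zip(dense_w, dense_b)]
    top = max(logits)  # subtract the max for a numerically stable softmax
    exps = [math.exp(z - top) for z in logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    return probs.index(max(probs)), probs

# Hypothetical setup: 2 EMG channels, 64-sample window, 4 filters, 3 grip classes.
random.seed(0)
N_CH, N_SAMPLES, N_FILT, WIDTH, N_CLASSES = 2, 64, 4, 5, 3
window = [[random.gauss(0.0, 1.0) for _ in range(N_SAMPLES)] for _ in range(N_CH)]
kernels = [[[random.uniform(-0.5, 0.5) for _ in range(WIDTH)]
            for _ in range(N_CH)] for _ in range(N_FILT)]
bias = [0.0] * N_FILT
dense_w = [[random.uniform(-1.0, 1.0) for _ in range(N_FILT)]
           for _ in range(N_CLASSES)]
dense_b = [0.0] * N_CLASSES

label, probs = classify_window(window, kernels, bias, dense_w, dense_b)
print(label, [round(p, 3) for p in probs])
```

The point of the "no preprocessing" design is visible in the input: the convolution consumes the raw sample stream directly, so filtering and feature extraction are learned in the kernel weights rather than hand-coded beforehand.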

-

Sensor glove identifies objects

MIT’s Subramanian Sundaram has developed a sensor glove that identifies objects through touch. This could improve assistive robot performance and enhance prosthetic design. The low-cost “scalable tactile glove” includes 550 tiny pressure sensors. A neural network uses the sensor data to classify objects and predict their weights, with no visual input required. In a Nature paper, the system…

-

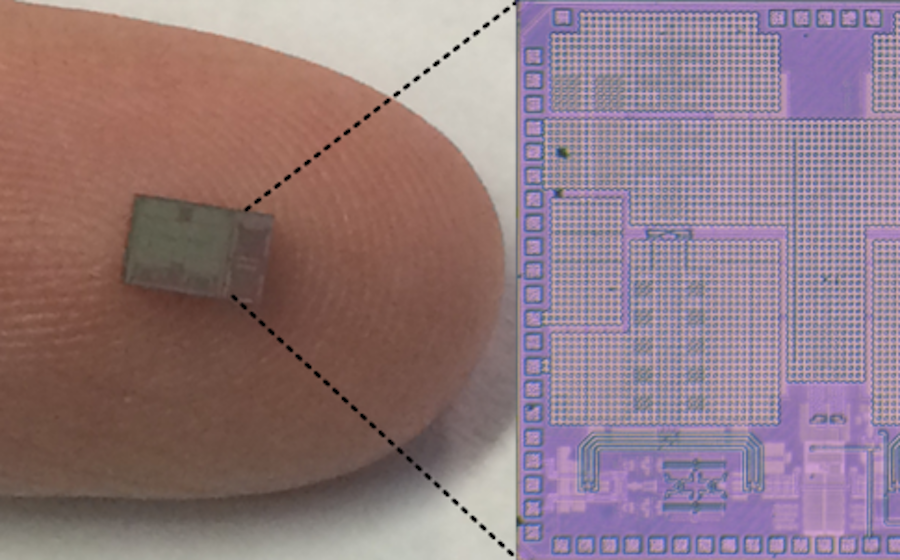

“Artificial nerve” system for sensory prosthetics, robots

Stanford’s Zhenan Bao has developed an artificial sensory nerve system that can activate the twitch reflex in a cockroach and identify letters in the Braille alphabet. Bao describes it as “a step toward making skin-like sensory neural networks for all sorts of applications,” including artificial skin that creates a sense of touch in prosthetics. The artificial…

-

DARPA’s Justin Sanchez on driving and reshaping biotechnology | ApplySci @ Stanford

DARPA Biological Technologies Office Director Dr. Justin Sanchez on driving and reshaping biotechnology. Recorded at ApplySci’s Wearable Tech + Digital Health + Neurotech Silicon Valley conference on February 26-27, 2018 at Stanford University. Join ApplySci at the 9th Wearable Tech + Digital Health + Neurotech Boston conference on September 25, 2018 at the MIT Media…

-

Phillip Alvelda: More intelligent; less artificial | ApplySci @ Stanford

Cortical founder and former DARPA NESD program manager Phillip Alvelda discusses AI and the brain at ApplySci’s Wearable Tech + Digital Health + Neurotech Silicon Valley conference on February 26-27, 2018 at Stanford University. Join ApplySci at the 9th Wearable Tech + Digital Health + Neurotech Boston conference on September 25, 2018 at the MIT Media…

-

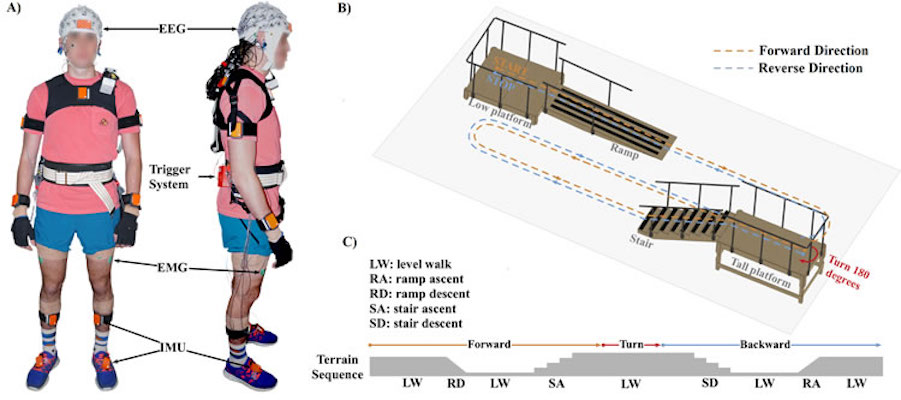

Closed loop EEG/BCI/VR/physical therapy system to control gait, prosthetics

Earlier this year, University of Houston’s Jose Luis Contreras-Vidal developed a closed-loop BCI/EEG/VR/physical therapy system to control gait as part of a stroke/spinal cord injury rehab program. The goal was to promote and enhance cortical involvement during walking. In a study, 8 subjects walked on a treadmill while watching an avatar and wearing a 64…

-

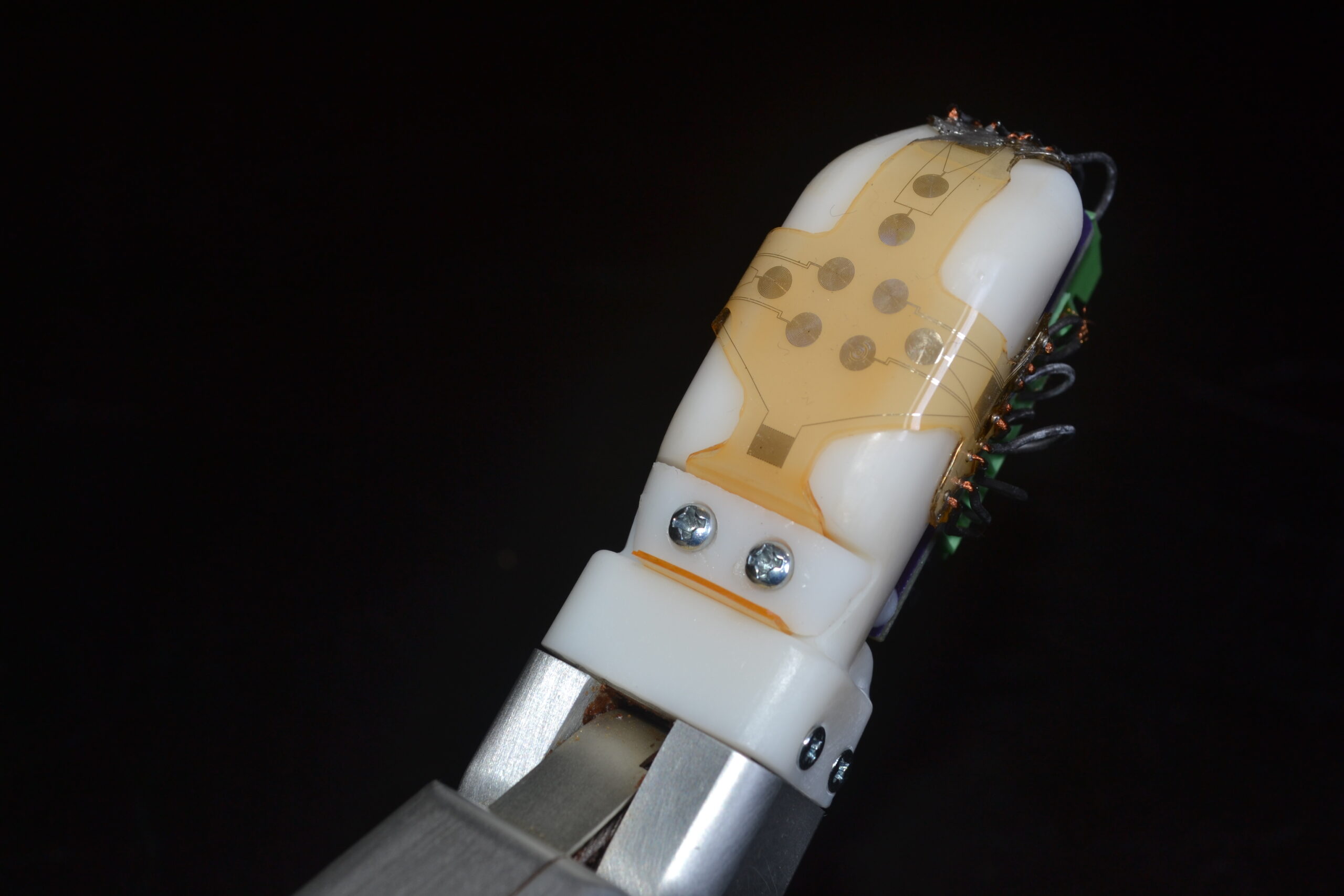

Prosthetic “skin” senses force, vibration

Jonathan Posner, with University of Washington and UCLA colleagues, has developed a flexible sensor “skin” that can be stretched over prostheses to measure force and vibration. The skin mimics the way a human finger responds to tension and compression as it slides along a surface or distinguishes among different textures. This could allow users to sense when…

-

Sensor-embedded prosthetic monitors gait, detects infection

Jerome Lynch, with ONR and Walter Reed researchers, has developed a “smart” prosthetic leg with embedded sensors that monitor a wearer’s gait, the condition of the device, and the risk of infection. The Monitoring OsseoIntegrated Prostheses system uses a limb that includes a titanium fixture surgically implanted into the femur. Bone grows at the implant’s connection…

-

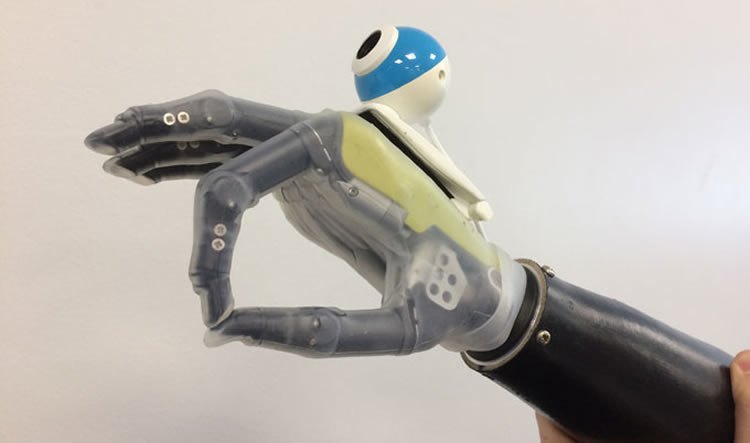

Deep learning driven prosthetic hand + camera recognize, automate required grips

Ghazal Ghazaei and Newcastle University colleagues have developed a deep learning driven prosthetic hand + camera system that allows wearers to reach for objects automatically. Current prosthetic hands are controlled via a user’s myoelectric signals, which requires learning, practice, concentration and time. A convolutional neural network was trained with images of 500 graspable objects, and taught to recognize…

-

Solar powered, highly sensitive, graphene “skin” for robots, prosthetics

Professor Ravinder Dahiya, at the University of Glasgow, has created a robotic hand with solar-powered graphene “skin” that he claims is more sensitive than a human hand. The flexible, tactile, energy autonomous “skin” could be used in health monitoring wearables and in prosthetics, reducing the need for external chargers. (Dahiya is now developing a low-cost…

-

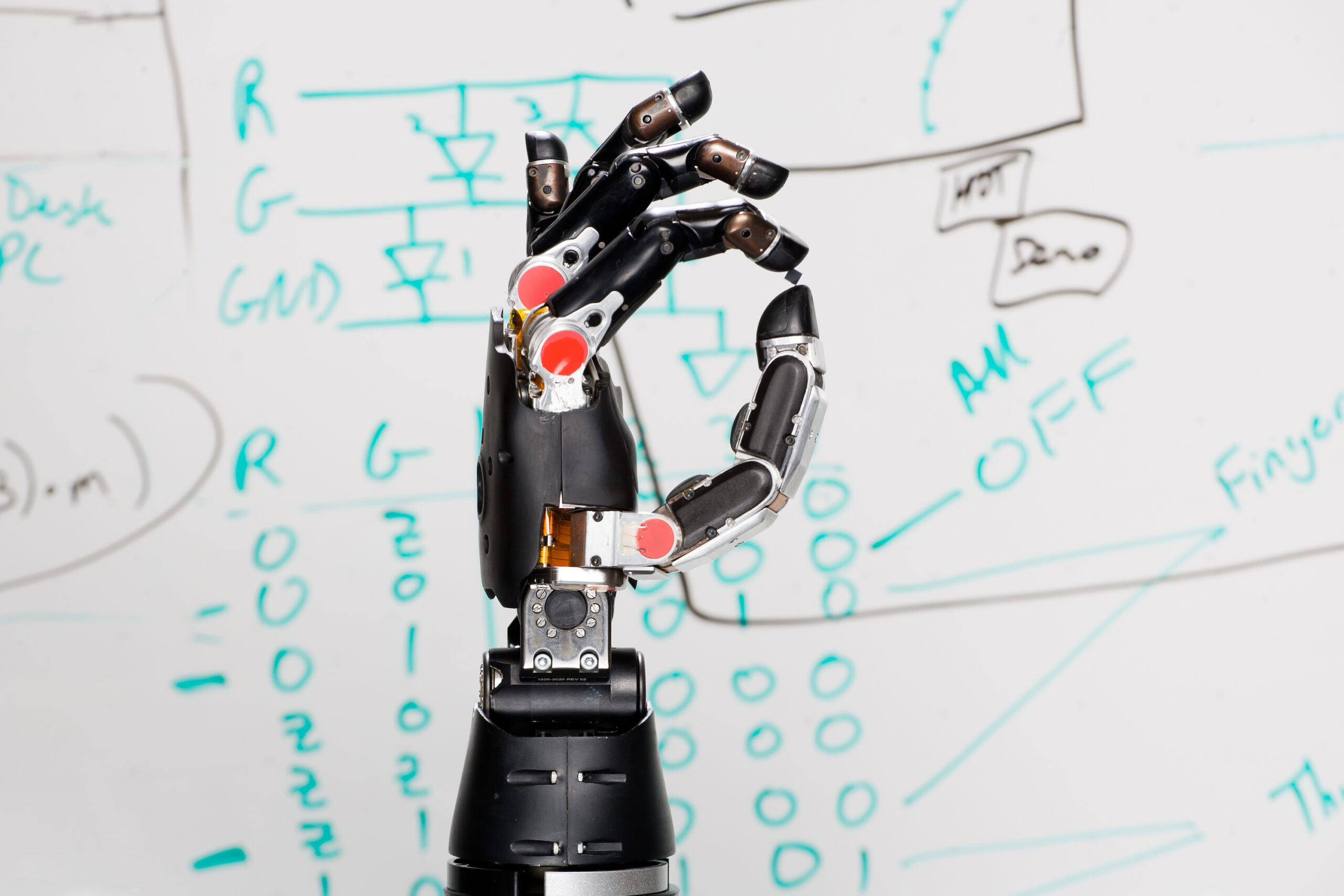

Thought controlled prosthetic arm has human-like movement, strength

This week at the Pentagon, Johnny Matheny unveiled his DARPA-developed prosthetic arm. The mind-controlled prosthesis has the same size, weight, shape and grip strength as a human arm and, according to Matheny, can do anything a human arm can do. It is, by all accounts, the most advanced prosthetic limb created to date. The 100-sensor arm…

-

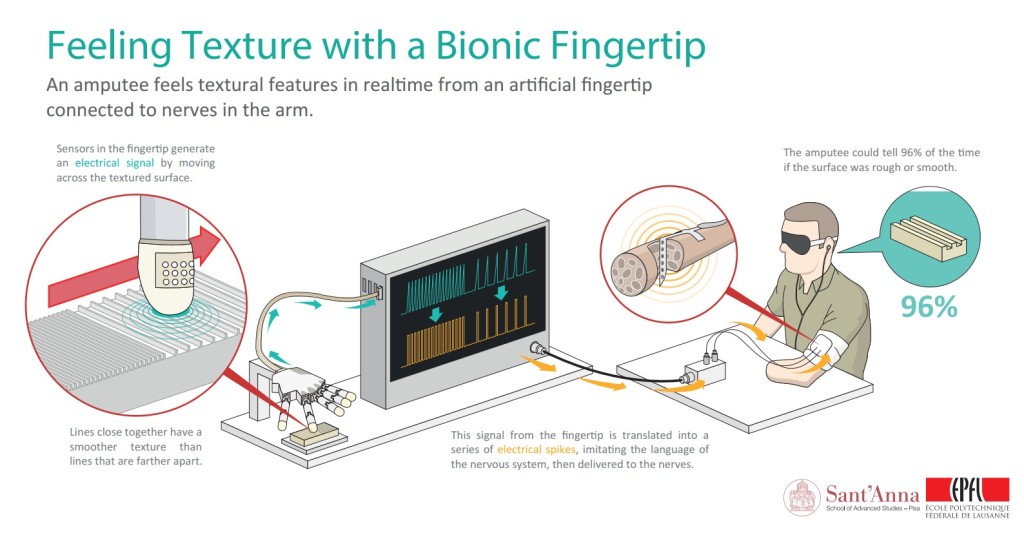

Bionic finger, implanted electrodes, enable amputee to “feel” texture

Swiss Federal Institute of Technology and Scuola Superiore Sant’Anna researchers have developed a bionic fingertip that allows amputees to feel textures and differentiate between rough and smooth surfaces. Electrodes were surgically implanted into the upper arm of a man whose arm had been amputated below the elbow. A machine moved an artificial finger, wired with electrodes, across…